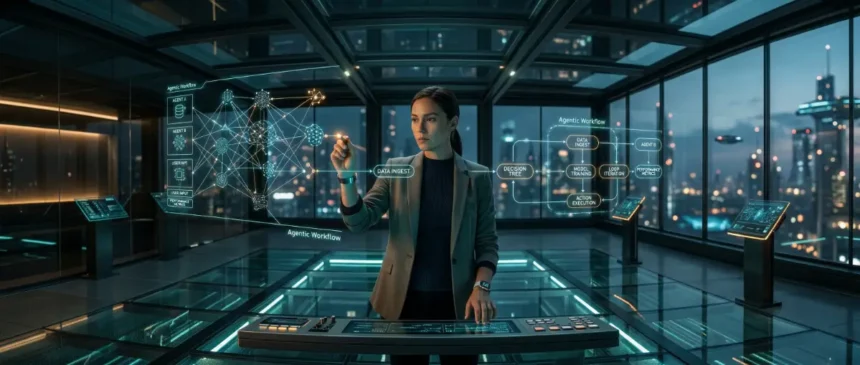

By late 2026, AI utility has shifted from generating text to executing autonomous “Agentic Workflows” that handle end-to-end professional tasks without constant prompting. To maintain career viability, professionals must transition from “doers” to “System Architects” who manage multi-agent ecosystems while preserving the high-level critical thinking that LLMs still struggle to replicate. The era of the simple chatbot is over; the era of the human-led autonomous enterprise has arrived.

🚀 Key Takeaways

- From Prompting to Orchestration: 2026 marks the decline of “prompt engineering” as AI agents now understand intent and context natively, shifting the human value-add to strategic oversight and quality control.

- The “Cognitive Atrophy” Risk: Organizations are beginning to mandate “AI-free” deep-work sessions to prevent the erosion of original problem-solving capabilities among mid-level staff.

- Agentic Career Moats: Survival in the 2026 labor market depends on mastering Human-AI Hybrid Teams, where the human acts as the ultimate ethical and strategic filter for autonomous outputs.

How We Evaluated This

Our analysis is based on a multi-dimensional audit of current technological trajectories and labor market shifts. We utilized:

- Metric-Driven Forecasting: Analyzing compute-cost-to-utility ratios from 2024–2026.

- Regulatory Tracking: Monitoring the implementation phases of the EU AI Act and its impact on algorithmic transparency.

- Industry Benchmarking: Cross-referencing 2026 strategic roadmap data from Gartner and McKinsey.

- Technical Synthesis: Evaluating the maturation of Agentic Workflows and Multi-Agent Systems (MAS) in enterprise environments.

The Evolution of Agentic Workflows: Beyond the 2024 Chatbot Era

In 2026, the primary differentiator in the professional landscape is the shift from “Generative AI” to “Agentic AI,” where systems no longer wait for prompts but autonomously execute complex, multi-step business goals. Unlike the 2024 era characterized by isolated interactions with large language models (LLMs), current enterprise environments utilize Agentic Workflows that can plan, use external tools, and self-correct. For the Pragmatic Careerist, this means the task of “writing” or “coding” has been superseded by the task of “System Orchestration.”

From Linear Bots to Autonomous Loops

The “Chatbot Era” of 2024 was fundamentally linear: a human provided a prompt, and the AI provided a static response. In contrast, 2026 workflows are iterative and “loop-based.” An agent today receives a high-level objective, such as “optimize the Q3 supply chain for logistics delays in Southeast Asia,” and breaks that goal into sub-tasks. It queries real-time shipping data, evaluates weather patterns, drafts alternative contracts, and presents the human supervisor with only a finalized strategic recommendation.
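To make the loop concrete, here is a minimal sketch of a plan, act, and check cycle. Everything in it is an assumption for illustration: the three tool functions are hypothetical stand-ins for real data sources, the “plan” is simply one sub-task per tool, and the self-check only tests for an empty result.

```python
from typing import Callable

# Hypothetical stand-ins for real tools (shipping API, weather feed, contract drafter).
def query_shipping(goal: str) -> str:
    return "2 ports delayed"

def check_weather(goal: str) -> str:
    return "typhoon risk: moderate"

def draft_contract(goal: str) -> str:
    return "alternative carrier contract drafted"

def run_agent(goal: str, tools: list[Callable[[str], str]], max_retries: int = 2) -> dict:
    """Run one sub-task per tool, retry empty results, return a single recommendation."""
    findings: list[str] = []
    for tool in tools:                          # the "plan": one sub-task per tool
        for _attempt in range(max_retries + 1):
            result = tool(goal)
            if result:                          # trivial self-check; real checks would be richer
                findings.append(result)
                break
        else:                                   # every attempt came back empty
            findings.append(f"{tool.__name__}: no usable result")
    return {"goal": goal, "recommendation": "; ".join(findings)}

report = run_agent(
    "optimize the Q3 supply chain",
    [query_shipping, check_weather, draft_contract],
)
print(report["recommendation"])
```

The human sees only `report["recommendation"]`; the intermediate tool calls stay inside the loop, which is the difference from a one-prompt, one-response exchange.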

The Rise of Multi-Agent Systems (MAS)

A critical technical shift is the maturation of Multi-Agent Systems (MAS). Rather than one giant model trying to do everything, specialized agents now collaborate in a “digital assembly line.”

- The Researcher Agent: Scans for real-time market shifts and Gartner updates.

- The Analyst Agent: Processes raw data into formatted intelligence.

- The Critic Agent: Specifically designed to find flaws, hallucinations, or compliance risks in the Analyst’s work.
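The assembly line above can be sketched as three plain functions. This is an illustrative toy, not a real framework: the facts, the source labels, and the rule that “a claim without a source is suspect” are all assumptions made for the example.

```python
from dataclasses import dataclass

@dataclass
class Draft:
    claims: list[str]
    sources: dict[str, str]   # claim -> source label; a missing entry means unsupported

def researcher() -> dict[str, str]:
    # Hypothetical facts, as if pulled from a market-data feed.
    return {"freight rates rose 4%": "feed-A", "port X reopened": "feed-B"}

def analyst(facts: dict[str, str]) -> Draft:
    # The analyst adds one claim with no source, on purpose, so the critic has work to do.
    claims = list(facts) + ["demand will double"]
    return Draft(claims=claims, sources=facts)

def critic(draft: Draft) -> list[str]:
    """Return every claim that has no source attached."""
    return [claim for claim in draft.claims if claim not in draft.sources]

flagged = critic(analyst(researcher()))
print(flagged)  # ['demand will double']
```

Keeping the critic separate from the analyst means the check does not depend on the component that produced the error.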

The “Human-as-Orchestrator” Model

This evolution has reclassified “entry-level” work. Tasks that previously required a junior associate (data entry, basic synthesis, and initial drafting) are now handled by Agentic Workflows. Consequently, the new “entry-level” role is the Agent Supervisor. This professional must understand Entity Salience (knowing which data points are critical to a system’s success and which are noise) and system logic to ensure the agents remain aligned with the firm’s “ground truth” data. Those who fail to adapt to this “Human-in-the-Loop” architecture face immediate skill obsolescence.

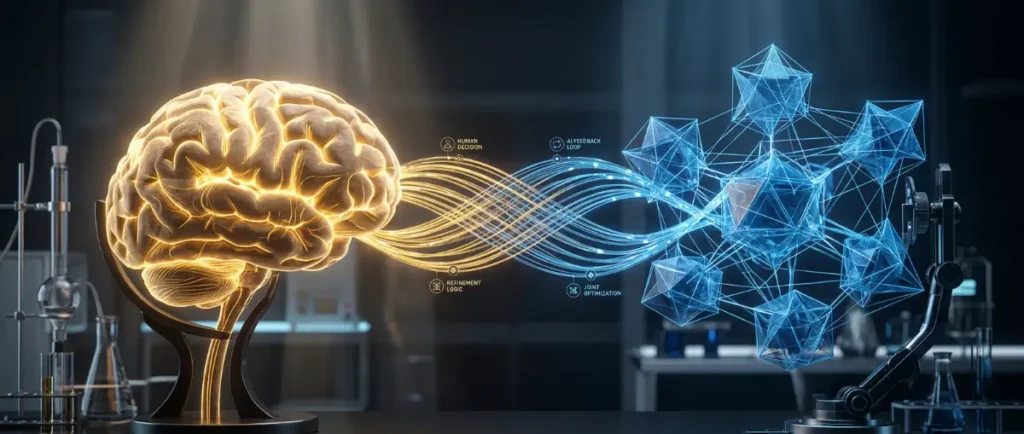

The 50% Critical-Thinking Threshold: Combatting Cognitive Atrophy

By late 2026, the “Cognitive Atrophy” crisis has forced 50% of Tier-1 organizations to implement “AI-Free” skill assessments to verify that human employees can still perform first-principles reasoning. As Agentic Workflows become the default for daily operations, the risk of “deskilling”—where professionals lose the ability to troubleshoot or innovate without algorithmic assistance—has become a top-tier operational risk. The Pragmatic Careerist must now treat mental acuity as a protected asset rather than a baseline assumption.

The Mechanics of Cognitive Atrophy

Cognitive atrophy occurs when the “feedback loop” of human effort and reward is bypassed by AI delegation. In 2024, professionals still drafted the logic of their work; by 2026, many simply “vibe-check” AI-generated outputs. This creates a dangerous dependency. If the underlying Multi-Agent Systems (MAS) encounter a “black box” failure or a logic gap, a deskilled workforce lacks the foundational knowledge to intervene. Organizations are now identifying this as a “Single Point of Failure” in human capital.

The “AI-Free” Protocol Shift

To mitigate this, forward-thinking firms are adopting “Sandbox Thinking” periods. These are mandatory windows in which internal strategy, architectural design, and ethical edge-case mapping must be conducted without access to an LLM. This ensures that the Human-in-the-Loop remains technically competent enough to provide meaningful oversight. According to McKinsey, firms that maintain a 20% “unplugged” workflow report 30% higher resilience during model-drift events.

Protecting Your Strategic Moat

For the careerist, the competitive advantage has shifted from “Speed of Execution” to “Depth of Reasoning.” While an agent can synthesize World Economic Forum data in seconds, it lacks the lived, human context of local office politics, unstated client preferences, and long-term brand intuition.

- First-Principles Auditing: The ability to manually deconstruct an AI’s conclusion to find the “hallucinated logic.”

- Contextual Synthesis: Merging disparate data points that the AI has been restricted from accessing due to privacy or “air-gapping.”

The Value of Intellectual Sovereignty

Ultimately, the 2026 labor market rewards “Intellectual Sovereignty.” This is the ability to maintain a distinct, verifiable perspective that is not merely a reflection of the dominant training data. As AI models converge on “average” answers, the human who can provide a contrarian, data-backed deviation becomes the highest-paid entity in the room.

Multi-Agent Systems and the New Career Hierarchy

In the 2026 labor market, professional seniority is no longer defined by the number of human subordinates one manages, but by the complexity of the Multi-Agent Systems (MAS) under one’s orchestration. The traditional corporate pyramid has flattened, replaced by a “Hub-and-Spoke” model where a single human “Principal” directs a fleet of specialized digital entities. This structural shift has birthed the Multi-Agent Orchestrator, a role that combines project management with algorithmic auditing.

The Anatomy of a 2026 Multi-Agent Team

Modern workflows are now modular. Instead of a single “General AI,” firms deploy clusters of task-specific agents that communicate via a shared “blackboard” architecture. A typical marketing or engineering lead might oversee:

- The Compliance Agent: Continuously audits outputs against the EU AI Act and internal security protocols.

- The Strategic Researcher: Accesses live data streams from Gloat to adjust project trajectories.

- The Execution Agent: Handles high-volume technical drafting or data processing.
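A “blackboard” is simply a shared workspace that agents read from and write to instead of calling each other directly. The sketch below is a minimal, assumed version: the topic names, the `compliance_agent` rule, and the sample drafts are hypothetical and exist only to show the pattern.

```python
from collections import defaultdict
from typing import Callable

class Blackboard:
    """Shared workspace: agents post items under a topic; subscribers are notified."""

    def __init__(self) -> None:
        self.entries = defaultdict(list)       # topic -> posted items
        self.subscribers = defaultdict(list)   # topic -> handler functions

    def subscribe(self, topic: str, handler: Callable[..., None]) -> None:
        self.subscribers[topic].append(handler)

    def post(self, topic: str, item: str) -> None:
        self.entries[topic].append(item)
        for handler in self.subscribers[topic]:
            handler(self, item)

def compliance_agent(board: Blackboard, draft: str) -> None:
    # Hypothetical rule standing in for a real policy check.
    verdict = "rejected" if "personal data" in draft.lower() else "approved"
    board.post(verdict, draft)

board = Blackboard()
board.subscribe("draft", compliance_agent)

# The execution agent posts drafts; the compliance agent reacts to each one.
board.post("draft", "Campaign brief for Q3")
board.post("draft", "Mailing list built from scraped personal data")

print(board.entries["approved"])
print(board.entries["rejected"])
```

Because agents only know about topics, a new specialist (say, a researcher posting to a `"market-update"` topic) can be added without changing the existing ones.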

The “Principal” vs. The “Worker”

The career “moat” has moved upstream. If your primary value in 2024 was “producing deliverables,” you are now redundant. The 2026 “Pragmatic Careerist” functions as a Principal Investigator. This requires a deep understanding of Entity Salience—knowing which data points are critical to a system’s success and which are noise. The ability to identify “Model Drift” (when an agent’s logic begins to decay over time) is now a more valuable skill than the technical ability the agent provides.

Navigating the Agentic Promotion Track

Promotion in 2026 is granted to those who can demonstrate “Systemic Reliability.” This is measured by the delta between an agent’s raw output and the finalized, human-approved product: the less rework the human has to do, the more reliable the system.

- Level 1 (Operator): Managing 2-3 agents for repetitive task completion.

- Level 2 (Architect): Designing the interaction logic between 10+ agents to solve open-ended problems.

- Level 3 (Strategist): Aligning autonomous agentic goals with long-term enterprise ROI and ethical guardrails.
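The article does not say how the “delta” behind Systemic Reliability is computed. One plausible reading, offered here purely as an assumption, is the share of words that changed between the agent’s raw output and the approved version. The sketch uses `difflib.SequenceMatcher` from the Python standard library; the function name `edit_delta` is hypothetical.

```python
from difflib import SequenceMatcher

def edit_delta(raw: str, approved: str) -> float:
    """Word-level change between raw and approved text: 0.0 = identical, 1.0 = nothing shared."""
    matcher = SequenceMatcher(None, raw.split(), approved.split())
    return 1.0 - matcher.ratio()

raw_output = "ship via port A by Friday"
approved = "ship via port B by Friday"
print(round(edit_delta(raw_output, approved), 3))
```

A lower number means the human changed less. Tracked over many deliverables, a rising average would suggest the agents need attention, though a word-level diff ignores how serious each change was.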

Managing “Digital Friction”

A new challenge for the 2026 professional is “Digital Friction”—the loss of efficiency when agents provide conflicting data or enter “infinite loops” of self-correction. Resolving these technical deadlocks requires a human with “Cross-Domain Intuition,” a trait currently missing from even the most advanced Multi-Agent Systems. Successful careerists are those who can step into the loop, identify the logical bottleneck, and reset the system’s parameters.
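An “infinite loop” of self-correction can often be caught mechanically before a human has to step in. The sketch below is a minimal guard under stated assumptions: each round of correction is modeled as a hypothetical `step` function, and states are hashable values (such as strings) so repeats can be detected.

```python
from typing import Callable, Hashable

def run_with_loop_guard(
    step: Callable[[Hashable], Hashable],
    state: Hashable,
    max_steps: int = 20,
) -> tuple[Hashable, str]:
    """Apply `step` until the state stops changing, repeats, or the budget runs out."""
    seen = {state}
    for _ in range(max_steps):
        new_state = step(state)
        if new_state == state:
            return state, "converged"
        if new_state in seen:
            return new_state, "cycle detected"
        seen.add(new_state)
        state = new_state
    return state, "step budget exhausted"

# Two agents that keep overriding each other flip between two plans forever.
def flip_flop(plan: str) -> str:
    return "plan-B" if plan == "plan-A" else "plan-A"

print(run_with_loop_guard(flip_flop, "plan-A"))  # ('plan-A', 'cycle detected')
```

The guard only reports the deadlock. Deciding which plan wins is still the human judgment call the section describes.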

DeAI and Regulatory Impacts: Navigating the EU AI Act and Data Sovereignty

By 2026, the “Wild West” era of unregulated AI has ended, replaced by the strict enforcement of the EU AI Act and the rise of Decentralized AI (DeAI) as a corporate necessity. For the Pragmatic Careerist, compliance is no longer a legal afterthought; it is a core technical competency. Understanding how to deploy Agentic Workflows within “Sovereign Data Clouds” is the new benchmark for senior management roles.

The Enforcement of the EU AI Act

The EU AI Act sorts AI systems into risk tiers, and enterprise systems that fall into its “High-Risk” categories (for example, those used in hiring, credit scoring, or critical infrastructure) require exhaustive documentation on training data, bias controls, and human oversight. Professionals in 2026 are now “Compliance Architects.” They must ensure that every such Multi-Agent System possesses a “Technical Passport”: a verifiable log of the decisions the AI made, in line with the Act’s technical documentation and record-keeping requirements. Penalties are tiered: fines can reach 7% of global annual turnover for prohibited practices and 3% for breaches of high-risk obligations, making “Explainable AI” (XAI) the only viable path forward.
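What makes a decision log “verifiable” is that entries cannot be quietly edited afterward. A common way to get that property is a hash chain, where each entry stores the hash of the one before it. The sketch below is illustrative only: the field names are assumptions, and it is not a format prescribed by the EU AI Act.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def _digest(agent: str, decision: str, prev: str) -> str:
    body = {"agent": agent, "decision": decision, "prev": prev}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

def append_entry(log: list[dict], agent: str, decision: str) -> None:
    """Add a decision whose hash covers its content and the previous entry's hash."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"agent": agent, "decision": decision, "prev": prev,
                "hash": _digest(agent, decision, prev)})

def verify(log: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev = GENESIS
    for entry in log:
        expected = _digest(entry["agent"], entry["decision"], entry["prev"])
        if entry["prev"] != prev or entry["hash"] != expected:
            return False
        prev = entry["hash"]
    return True

passport: list[dict] = []
append_entry(passport, "analyst", "ranked supplier B first")
append_entry(passport, "critic", "flagged missing audit report")
print(verify(passport))  # True
```

Changing any stored decision after the fact makes `verify` return `False`, which is the property an auditor would care about.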

The Shift to Decentralized AI (DeAI)

To bypass the privacy risks of centralized “Big Tech” models, firms are migrating to DeAI. This involves running smaller, specialized models on local hardware or decentralized nodes.

- Data Sovereignty: Companies now keep their proprietary “Ground Truth” data off public servers to prevent model poisoning or intellectual property theft.

- On-Device Agency: High-level careerists now manage “Edge Agents” that live on secure local devices, ensuring that sensitive strategic planning never leaves the corporate firewall.

The “Verifiable Human” Protocol

A major 2026 trend is the “Proof of Personhood” in digital workflows. As AI agents become indistinguishable from humans in text and voice, the World Economic Forum has highlighted the need for cryptographic signatures on all human-originated work. The careerist’s value is now tied to their “Digital Reputation Score”—a blockchain-verified history of their specific contributions, edits, and strategic overrides within Human-AI Hybrid Teams.
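As a rough illustration of tagging human-originated work, the sketch below uses HMAC from the Python standard library. This is a deliberate simplification: HMAC relies on a shared secret, so it is a stand-in for the public-key signatures a real “Proof of Personhood” scheme would need. The key and the sample text are made up for the example.

```python
import hashlib
import hmac

def sign(author_key: bytes, content: str) -> str:
    """Return a hex tag binding the content to whoever holds the key."""
    return hmac.new(author_key, content.encode("utf-8"), hashlib.sha256).hexdigest()

def is_authentic(author_key: bytes, content: str, tag: str) -> bool:
    """Check a tag using a constant-time comparison."""
    return hmac.compare_digest(sign(author_key, content), tag)

demo_key = b"demo-only-secret"            # hypothetical key; never hard-code real keys
memo = "Override: reject the agent's pricing proposal."
tag = sign(demo_key, memo)

print(is_authentic(demo_key, memo, tag))                 # True
print(is_authentic(demo_key, memo + " (edited)", tag))   # False
```

Any change to the text invalidates the tag, so a verified tag shows that the holder of the key approved exactly this wording.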

Regulatory Arbitrage as a Skill

The global regulatory landscape is fragmented. A Pragmatic Careerist must understand “Regulatory Arbitrage”—the ability to deploy AI systems across different jurisdictions (e.g., USA vs. China vs. UAE) while maintaining localized compliance. This requires a deep synthesis of Gloat’s workforce data and regional legal frameworks. Those who can navigate these “Geofenced Intelligence” zones are becoming the most sought-after consultants in the tech sector.

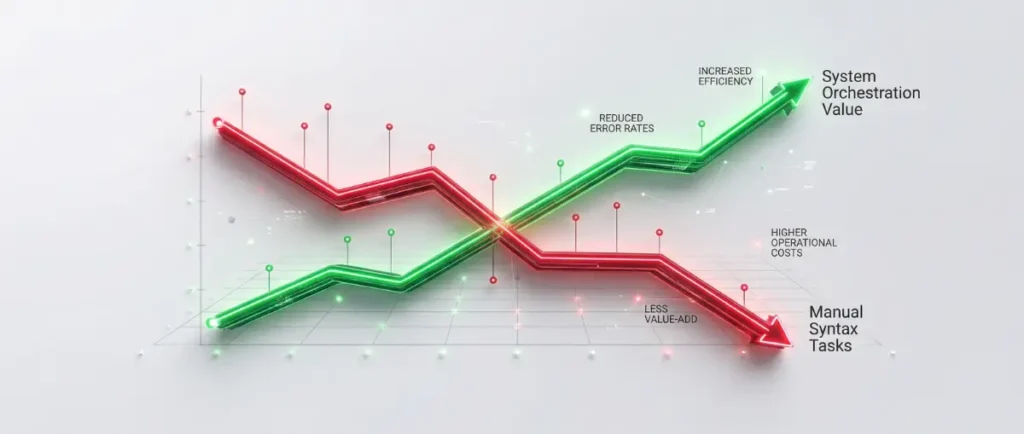

The Shift in Technical Hard Skills: From Syntax to System Orchestration

In 2026, the “Hard Skill” hierarchy has inverted; manual coding and data synthesis are now low-value commodities, while “System Orchestration” and “Inter-Model Logic” are the high-yield technical foundations. As Agentic Workflows now handle the “syntax” of professional output—whether that is Python, SQL, or legal drafting—the Pragmatic Careerist must master the architectural layer that connects these autonomous agents. Technical proficiency is no longer defined by what you can build, but by what you can integrate and audit.

The Death of the “Syntax Specialist”

By late 2026, the cost of generating a line of code or a financial model has dropped to near zero. Consequently, being a “specialist” in a specific language or tool is a career liability. According to Gloat, 70% of technical roles now require “Poly-Model Literacy.” This is the ability to switch between different Multi-Agent Systems (e.g., using a specialized reasoning model for logic and a creative model for front-end interface) without losing Entity Salience or data integrity.

Mastering the “Orchestration Layer”

The new technical “Hard Skill” is managing the Orchestration Layer. This involves:

- Latency Management: Optimizing the speed at which agents communicate to prevent “System Stutter.”

- Context Window Auditing: Ensuring that the AI has the correct “Ground Truth” data from McKinsey and internal silos without exceeding the model’s context window.

- Error Propagation Mapping: Identifying where a single hallucination in a “Researcher Agent” corrupted the final output of the “Execution Agent.”
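Error propagation mapping, the last item above, is at heart a graph question: given which agent feeds which, what sits downstream of the faulty one? The sketch below answers that with a breadth-first search. The pipeline layout is a hypothetical example, not a description of any particular product.

```python
from collections import deque

def downstream_of(graph: dict[str, list[str]], source: str) -> list[str]:
    """Return every agent that directly or indirectly consumes `source`'s output."""
    affected: list[str] = []
    seen = {source}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for consumer in graph.get(node, []):
            if consumer not in seen:
                seen.add(consumer)
                affected.append(consumer)
                queue.append(consumer)
    return affected

# Hypothetical wiring: each key feeds its output to the agents in its list.
pipeline = {
    "researcher": ["analyst"],
    "analyst": ["critic", "execution"],
    "critic": ["execution"],
    "execution": [],
}

print(downstream_of(pipeline, "researcher"))  # ['analyst', 'critic', 'execution']
```

If the hallucination started in the researcher, everything in the returned list needs re-checking. If it started in the critic, only the execution agent does.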

The “Human-AI Hybrid” Debugging Skill

Debugging in 2026 is no longer about finding a missing semicolon; it is about finding a “Logic Drift.” When an autonomous agent begins to optimize for the wrong metric—such as prioritizing “low cost” over “regulatory compliance” with the EU AI Act—the human must intervene. This requires a deep understanding of “Prompt-less Engineering,” where you modify the underlying weights or data sources of the agentic loop rather than simply typing a new command.
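One hedged way to notice this kind of drift early is to track how often recent outputs pass a check that matters, such as a compliance test, and raise a flag when the rolling pass rate drops. The class below is a minimal sketch. The window size and threshold are arbitrary assumptions, and `DriftMonitor` is a hypothetical name.

```python
from collections import deque

class DriftMonitor:
    """Flags drift when the pass rate over the last `window` checks falls below a floor."""

    def __init__(self, window: int = 5, min_pass_rate: float = 0.8) -> None:
        self.results: deque[bool] = deque(maxlen=window)
        self.min_pass_rate = min_pass_rate

    def record(self, passed: bool) -> bool:
        """Store one check result; return True if drift is suspected."""
        self.results.append(passed)
        window_full = len(self.results) == self.results.maxlen
        pass_rate = sum(self.results) / len(self.results)
        return window_full and pass_rate < self.min_pass_rate

monitor = DriftMonitor(window=4, min_pass_rate=0.75)
history = [True, True, True, True, False, False]   # compliance checks over time
alerts = [monitor.record(outcome) for outcome in history]
print(alerts)  # [False, False, False, False, False, True]
```

The monitor only signals that something changed. Working out why the agent started trading compliance for cost remains the human’s job.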

Data Provenance and “Clean-Room” Analysis

As AI-generated content saturates the web, the ability to verify Data Provenance has become a high-tier technical skill. The 2026 professional must be able to trace a data point back to its original human source. Using decentralized protocols and “Clean-Room” environments to analyze data ensures that the careerist is building strategies on verified facts rather than “Model Collapse” echoes. This technical gatekeeping is the final barrier protecting the enterprise from automated misinformation.

The Value of “Human-Only” Skill Assets: A 2026 Competitive Analysis

By 2026, the global labor market has bifurcated into “Commoditized Task Execution” (AI-led) and “High-Value Strategic Synthesis” (Human-led), making non-automatable cognitive assets the only sustainable career moat. As Agentic Workflows reach 99% accuracy in technical replication, the economic value of a professional is now derived from the remaining 1%—the “Bio-Contextual” variables that silicon-based intelligence cannot simulate. For the Pragmatic Careerist, the competitive landscape is no longer against other humans, but against the “Average AI Output.”

The “Edge-Case” Specialist

Standard business scenarios are now solved instantly by Multi-Agent Systems. However, AI models struggle with “Black Swan” events—unprecedented market shifts or unique cultural nuances. The 2026 “Human-Only” asset is the ability to navigate these edge cases. According to the World Economic Forum, “Complex Problem Solving in Unstructured Environments” remains the #1 requested skill. This involves making high-stakes decisions when there is zero historical training data to guide an algorithm.

Comparative Value: Human vs. Agentic Systems

| Skill Dimension | AI Agent Capability (2026) | Human Principal Value | Market Premium |
|---|---|---|---|
| Data Processing | Instantaneous / High Scale | Contextual Filtering | Low (Commodity) |
| Strategy Design | Pattern-Based / Iterative | First-Principles Innovation | High (Strategic) |
| Ethical Auditing | Rule-Based (EU AI Act) | Moral Intuition & Nuance | High (Risk Mgmt) |
| Relationship Mgmt | Simulated Empathy | Authentic Trust & Networking | Extreme (Social) |

The “Authenticity Tax” and Social Capital

In a world flooded with synthetic media, “Biological Authenticity” has become a premium product. Clients in 2026 are willing to pay an “Authenticity Tax” for services verified to be human-led. This is particularly true in high-stakes negotiations and long-term partnership building. The Pragmatic Careerist leverages Social Capital—the offline, unrecorded relationships and “handshake” trusts—that Agentic Workflows cannot access or replicate via LinkedIn or email scraping.

Information Gain: The “Anti-Hype” Filter

One of the most valuable “Human-Only” assets in 2026 is the ability to act as an “Anti-Hype” filter. Because AI agents are prone to amplifying digital trends and “echo-chamber” data, the human professional provides the “Ground Truth” reality check. By checking claims against directly cited, verifiable sources such as McKinsey reports, a human can spot when an automated strategy is based on a “hallucinated consensus” rather than physical market reality. This ability to say “No” to an algorithmic recommendation is the ultimate mark of a senior executive.

Frequently Asked Questions: Future of AI

What are the most valuable non-automatable skills for professionals in 2026?

High-level strategic synthesis, ethical auditing, and complex negotiation remain strictly human domains. These roles require “Bio-Contextual” intuition and social trust. Algorithms currently lack the biological hardware to replicate authentic human-to-human relationship building.

How will the EU AI Act affect my daily workflow?

Compliance is now a technical requirement for enterprise AI deployments. For every autonomous agent that falls into a high-risk category, you must maintain a transparent “Technical Passport.” Failure to document algorithmic decision-making can result in fines of up to 3% of global annual turnover, and up to 7% for prohibited practices.

What is the “50% Critical-Thinking Threshold” in modern workplaces?

It refers to the point at which 50% of Tier-1 organizations have introduced “AI-free” skill assessments for their staff. These assessments guard against cognitive atrophy. They verify that humans remain capable of first-principles reasoning during system failures.

How do Multi-Agent Systems differ from 2024 chatbots?

2024 bots were linear and prompt-dependent, whereas 2026 systems are autonomous and goal-oriented. These agents collaborate in specialized clusters to execute end-to-end tasks. Humans now act as orchestrators rather than simple prompters.

Is “Prompt Engineering” still a viable career path in 2026?

No, traditional prompt engineering has been automated by intent-aware agentic layers. The market has shifted toward “System Orchestration” and inter-model logic. Professionals now focus on architectural oversight and verifying data provenance.

What is DeAI and why is it becoming a corporate standard?

Decentralized AI allows firms to run models on local, private “Sovereign Data Clouds.” This ensures proprietary intellectual property remains off public servers. It mitigates the privacy risks associated with centralized Big Tech models.

Strategic Summary: The 2026 Career Moat

The transition from the “Generative Era” to the “Agentic Era” has fundamentally redefined professional merit. To remain a Pragmatic Careerist, you must move beyond the execution of tasks and into the management of systems. By prioritizing Intellectual Sovereignty, mastering the Orchestration Layer, and maintaining “AI-Free” cognitive habits, you secure a position as the essential Human-in-the-Loop. In a world of infinite automated output, the human who can provide a verified, contrarian, and ethical “Yes” or “No” is the most valuable asset in the enterprise.